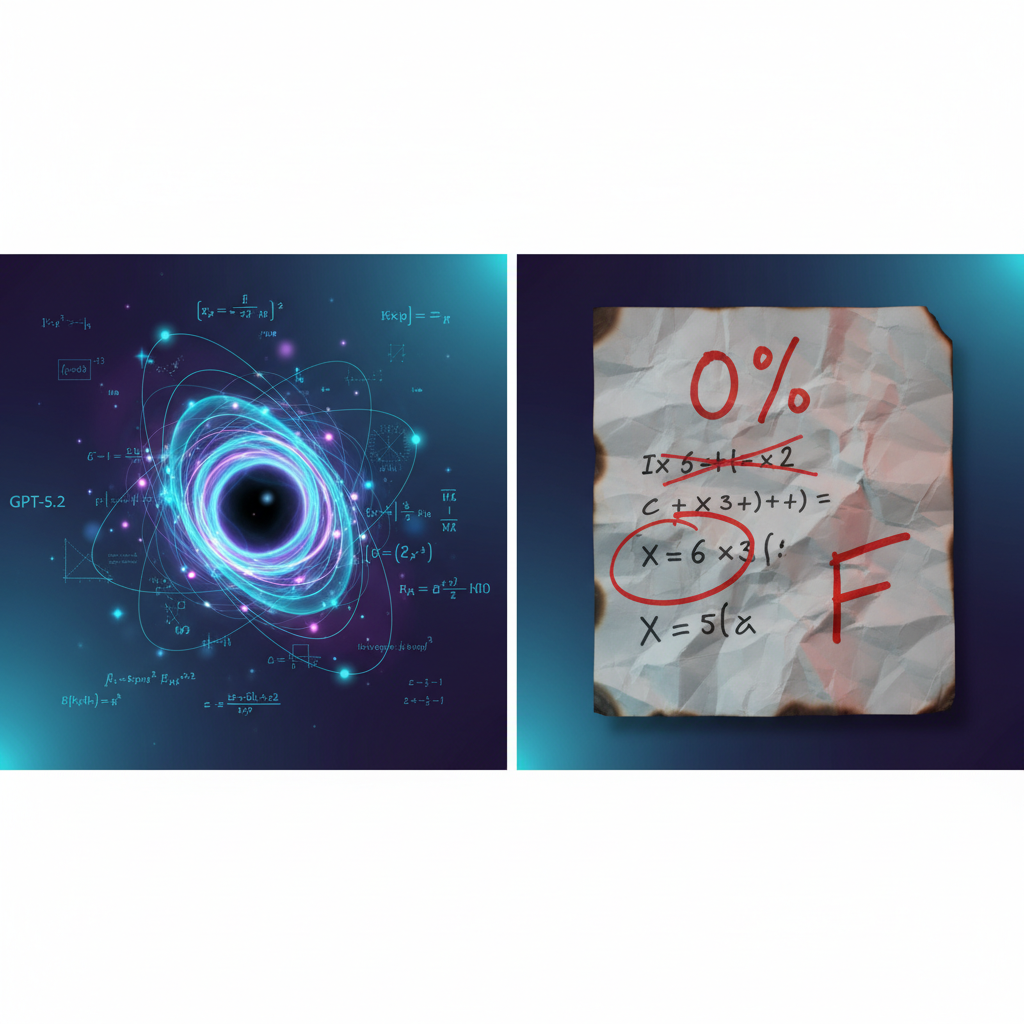

A model that cracks a problem physicists had puzzled over for years, then scores zero on a benchmark written by 50+ physicists. That’s not a bug. It’s the most important thing you need to understand about AI in 2026.

Last week, OpenAI announced that GPT-5.2 Pro derived a new result in theoretical physics: a closed-form formula for single-minus gluon scattering amplitudes. Researchers from the Institute for Advanced Study, Harvard, Cambridge, and Vanderbilt verified it. An internal scaffolded version then proved it in 12 hours.

The same model, at maximum reasoning effort, scored 0% on CritPt — a benchmark of 71 research-level physics problems designed by 50+ active researchers.

Zero.

TL;DR

- GPT-5.2 Pro derived a closed-form formula for single-minus gluon scattering amplitudes, which physicist Nima Arkani-Hamed had been curious about for 15 years. Researchers at IAS, Harvard, Cambridge, and Vanderbilt verified it

- The same model scored 0% on CritPt, a 71-problem research physics benchmark, at maximum reasoning effort

- Pattern recognition ≠ first-principles reasoning — these are fundamentally different cognitive capabilities, and current AI excels at one while failing the other

- For practitioners: treat AI as a “refactoring engine” for complexity, not an independent reasoner — and never trust it without human verification

GPT-5.2 can see patterns across superexponentially complex data that humans can’t, but it cannot reason from first principles. This isn’t a temporary limitation. It’s a fundamental characteristic of how these models work.

What Actually Happened

Here’s the chronology, because the details matter.

The Discovery

Nima Arkani-Hamed, a theoretical physicist at the Institute for Advanced Study and one of the world’s foremost, had been curious about single-minus gluon tree amplitudes for 15 years. Standard textbook arguments said these amplitudes should vanish, and for four decades physicists accepted that.

Human researchers had manually calculated the amplitudes for small numbers of gluons n (up to n=6), producing increasingly complicated expressions. The complexity of these Feynman diagram expansions grows superexponentially with n. No one could find a simplifying pattern.

GPT-5.2 Pro did.

The model simplified those hand-calculated expressions and identified a general formula: the single-minus analogue of the famous Parke-Taylor formula. It also identified the “half-collinear regime,” where momentum alignment allows non-zero amplitudes, exposing a loophole in what physicists had assumed for decades.
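For context, the Parke-Taylor formula is the standard closed form for the tree-level, color-ordered amplitude with exactly two negative-helicity gluons (the MHV case). Up to coupling and normalization conventions, and with the momentum-conserving delta function stripped off, it reads:

$$
A_n(1^+, \dots, i^-, \dots, j^-, \dots, n^+) = \frac{\langle i\, j \rangle^4}{\langle 1\, 2 \rangle \langle 2\, 3 \rangle \cdots \langle n\, 1 \rangle}
$$

Here $\langle a\, b \rangle$ is the spinor-helicity angle bracket of gluons $a$ and $b$. This is the long-known two-minus result, shown only to illustrate what a compact formula replacing a huge diagram expansion looks like. The new single-minus formula from the paper is not reproduced here.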

An internal scaffolded version of GPT-5.2 then spent 12 hours reasoning through the conjecture and produced a formal proof. The result was verified against the Berends-Giele recursion relation, cyclic symmetry, reflection symmetry, and Weinberg’s soft theorem. Researchers extended the approach from gluons to gravitons.

This is a 14-author paper with scientists from IAS, Harvard, Cambridge, Vanderbilt, and OpenAI. It’s real.

The Failure

On CritPt — a benchmark comprising 71 research-level physics challenges designed by over 50 active researchers across 30 institutions and 11 physics subfields — GPT-5.2 at maximum reasoning effort scored 0%.

Not 5%. Not 1%. Zero.

Why This Matters

The paradox reveals something fundamental: pattern recognition over superexponential complexity and first-principles reasoning from scratch are different cognitive capabilities. LLMs excel at the former. They fail at the latter.

This isn’t a “GPT-5.2 is bad” story. It’s the opposite — it made a genuine scientific discovery. But it tells us exactly how to use these models and when not to trust them.

For Researchers

We’ve crossed what the original article calls the “Erdős Threshold” — the point where AI models contribute publishable, peer-reviewed results to fundamental science. Not as independent researchers, but as collaborators that see patterns humans can’t.

The workflow that produced the gluon result was specific:

- Humans calculated base cases by hand

- AI simplified and identified general patterns

- AI produced a formal proof

- Humans verified through four independent methods

Remove any step, and the result doesn’t happen.
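The shape of that workflow can be sketched on a toy problem. The example below is an illustration only and has nothing to do with the gluon calculation itself: the "hand-computed base cases" are sums of cubes, the "conjecture" is a closed form a pattern-finder might propose, and the checks are independent ways of testing it. All function names are hypothetical, and the formal-proof step is not modeled.

```python
def base_case(n: int) -> int:
    """Stand-in for step 1: the slow, trusted calculation (done by hand in the article)."""
    return sum(k**3 for k in range(1, n + 1))


def conjectured_formula(n: int) -> int:
    """Stand-in for step 2: a closed form proposed after studying the base cases."""
    return (n * (n + 1) // 2) ** 2


def check_base_cases(max_n: int = 6) -> bool:
    """The closed form must reproduce every hand-computed value."""
    return all(conjectured_formula(n) == base_case(n) for n in range(1, max_n + 1))


def check_recursion(max_n: int = 200) -> bool:
    """Independent check: consecutive values must differ by n**3, well past the base cases."""
    return all(
        conjectured_formula(n) - conjectured_formula(n - 1) == n**3
        for n in range(1, max_n + 1)
    )


def check_held_out(values: tuple[int, ...] = (7, 10, 25)) -> bool:
    """Independent check: compare against trusted values the conjecture never saw."""
    return all(conjectured_formula(n) == base_case(n) for n in values)


def verify() -> dict[str, bool]:
    """Stand-in for step 4: run every check and report each result by name."""
    return {
        "base_cases": check_base_cases(),
        "recursion": check_recursion(),
        "held_out": check_held_out(),
    }


if __name__ == "__main__":
    results = verify()
    print(results)
    print("accept" if all(results.values()) else "reject")
```

The design point is that the conjecture is accepted only when every independent check passes, and that at least one check reaches beyond the inputs the pattern was found from.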

For Enterprise and Educators

If you’re evaluating AI capabilities, benchmarks alone will mislead you. A model that scores 0% on a physics benchmark just contributed to a breakthrough physics paper. The evaluation framework matters more than the score.

This has direct implications for:

- Enterprise AI adoption: Don’t benchmark your way to deployment decisions. Test on your actual workflows — especially the ones where the model will be given structured data and asked to find patterns

- Education: Standardized testing of AI capabilities is nearly meaningless. A student using GPT-5.2 could solve a research-level problem and fail the midterm. The tool is only as good as the scaffolding around it

- Hiring AI engineers: Look for people who understand when to deploy AI reasoning and when to verify independently, not people who can prompt their way to high benchmark scores

What to Do Next

1. Treat AI as a refactoring engine, not a reasoning engine. Give it base cases and ask it to generalize. Don’t ask it to reason from scratch. The gluon discovery worked because humans provided the hand-computed expressions for small n (up to n=6), and the model found the pattern.

2. Build verification into every AI-assisted workflow. The research team used four independent mathematical checks. If your team is using AI outputs without systematic verification, you’re building on sand. Create a verification checklist for every AI-derived result that matters.

3. Stop relying on benchmarks for deployment decisions. CritPt gave GPT-5.2 a 0%. The actual research workflow produced a publishable discovery. If you’re choosing AI tools based on benchmark leaderboards, you’re optimizing for the wrong metric. Test on your actual use cases instead.
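Testing on your own use cases can start very small. The sketch below is one possible shape, not a recommended framework: `WorkflowCase`, `pass_rate`, and `fake_model` are hypothetical names, and `fake_model` is a placeholder for a call to whichever model you are evaluating. Each case pairs a prompt from your real workflow with a check that decides whether the output is acceptable.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class WorkflowCase:
    """One task taken from your own workflow, with its own acceptance check."""
    name: str
    prompt: str
    is_acceptable: Callable[[str], bool]


def pass_rate(model: Callable[[str], str], cases: list[WorkflowCase]) -> float:
    """Fraction of cases whose model output passes that case's acceptance check."""
    if not cases:
        raise ValueError("need at least one case")
    passed = sum(1 for case in cases if case.is_acceptable(model(case.prompt)))
    return passed / len(cases)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in: replace with a call to the model under evaluation."""
    return prompt.upper()


# Illustrative cases only; real ones should come from tasks you actually run.
cases = [
    WorkflowCase("uppercase header", "total revenue", lambda out: out == "TOTAL REVENUE"),
    WorkflowCase("keeps digits", "q3 2025", lambda out: "2025" in out),
    WorkflowCase("adds units", "42", lambda out: out.endswith(" USD")),
]

if __name__ == "__main__":
    print(f"pass rate on own workflow: {pass_rate(fake_model, cases):.2f}")
```

Because the acceptance checks are yours, the number this produces measures fit to your workflow rather than position on a public leaderboard.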

The Bottom Line

The models aren’t coming for your job. They’re coming for the parts of your job where pattern recognition across massive complexity is the bottleneck.

The question is: do you know which parts of your work are which?

This story is developing. The full research paper documents GPT-5 experiments across mathematics, physics, astronomy, computer science, biology, and materials science. We’ll cover additional findings as they’re independently verified.