Google just launched Gemini 3.1 Pro, and developers are already testing it against GPT-5.3 and Claude Opus 4.6.

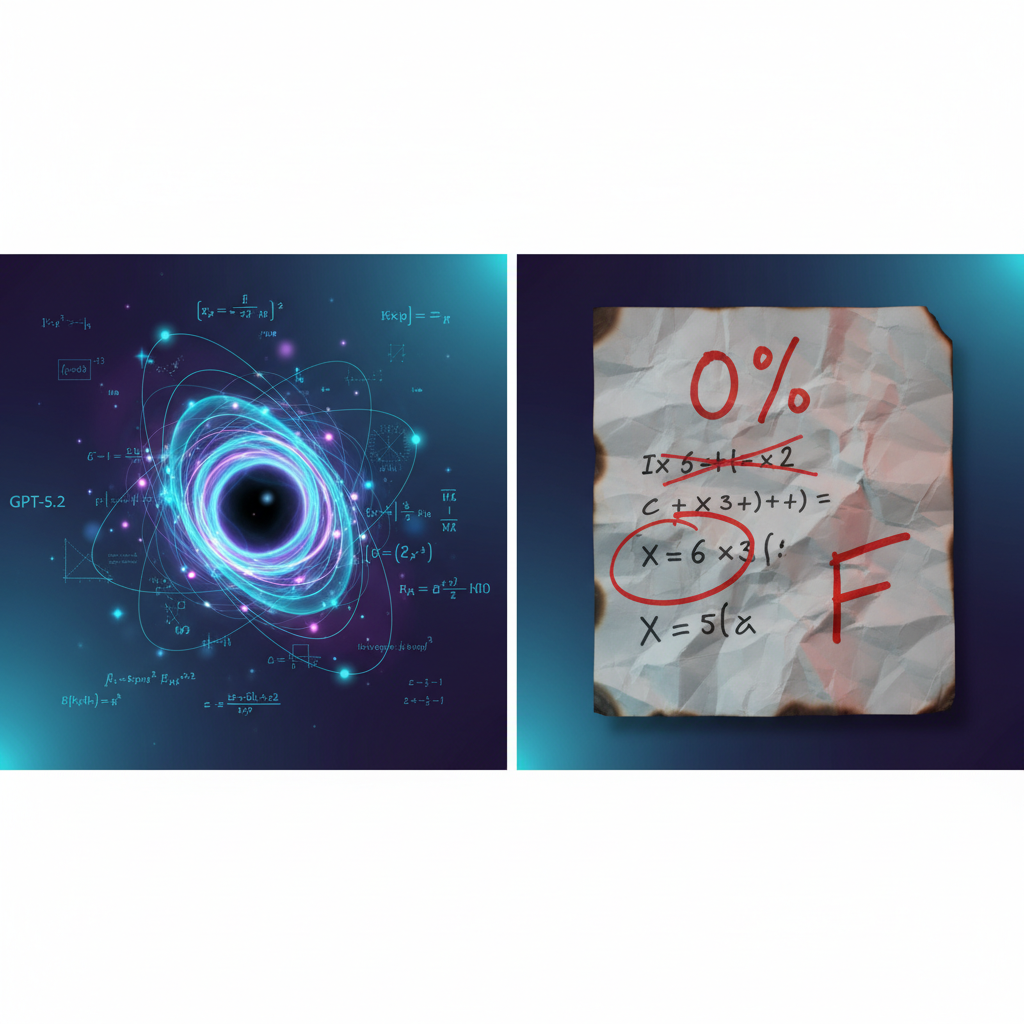

The headline number: 77.1% on ARC-AGI-2 — a benchmark that tests whether a model can solve logic patterns it’s never seen before. That’s more than double what Gemini 3 Pro scored. Not an incremental bump. A generational leap in reasoning.

If you build with LLMs, this matters. Reasoning capabilities directly translate to better code generation, more reliable analysis, and fewer hallucinations on complex tasks. Here’s everything you need to know.

Gemini 3.1 Pro more than doubles its predecessor’s ARC-AGI-2 score, reaching a verified 77.1%. Worth testing immediately if you work on complex problem-solving, code generation, or multi-step reasoning tasks.

What’s New in Gemini 3.1 Pro

This isn’t a minor version bump. Google positioned 3.1 Pro as the “upgraded core intelligence” behind their entire model family — including the recently released Gemini 3 Deep Think for scientific research.

Reasoning That Actually Works

The ARC-AGI-2 score is the standout metric. ARC-AGI-2 doesn’t test memorization or pattern matching on training data. It presents entirely new logic puzzles the model has never seen. Scoring 77.1% means the model can genuinely reason through novel problems — not just recall answers from its training set.

For context, Gemini 3 Pro scored under 35% on this same benchmark. Going from under 35% to 77.1% in a single generation is remarkable.

Complex Problem-Solving Focus

Google’s announcement emphasizes practical applications of this improved reasoning:

- Code generation: Building animated SVGs, complex dashboards, and interactive 3D visualizations from text prompts

- Data synthesis: Taking multiple complex APIs and combining them into functional applications

- Creative coding: Translating abstract concepts into working code with appropriate design choices

These aren’t cherry-picked demos — they reflect the kind of multi-step reasoning that separates useful AI from frustrating AI.

Where You Can Use It

3.1 Pro is rolling out across Google’s entire stack:

- Google AI Studio — Free preview access for developers

- Gemini API — Direct integration via `gemini-3.1-pro-preview`

- Vertex AI — Enterprise-grade access with SLAs

- Gemini CLI — Terminal-based access at geminicli.com

- Google Antigravity — Google’s agentic development platform

- Android Studio — Built-in for mobile developers

- Gemini App — Rolling out to Pro and Ultra subscribers

- NotebookLM — Available for Pro/Ultra users

That’s a wider day-one rollout than most model launches. No waitlist, no limited beta.

What We Don’t Know Yet

A few gaps remain:

- Full pricing details for API usage (preview is free, production pricing not yet announced)

- Context window size — Google hasn’t published this yet

- Multimodal capabilities — How vision and audio compare to 3 Pro

- Rate limits for the preview period

Benchmark Comparison

Let’s look at the numbers we have. Note: not all benchmarks are available for every model yet — we’re marking gaps honestly rather than making up numbers.

ARC-AGI-2 (Novel Reasoning)

Gemini 3.1 Pro’s 77.1% is a verified score — submitted to and confirmed by the ARC-AGI benchmark team. This is important because self-reported benchmarks are notoriously unreliable.

Overall Comparison

| Capability | Gemini 3.1 Pro | GPT-5.3 | Claude Opus 4.6 |
|---|---|---|---|
| Novel Reasoning (ARC-AGI-2) | 77.1% ✅ | ~65%* | ~68%* |
| Code Generation | Strong (demos shown) | Strong | Strong |
| Multimodal | Yes (details pending) | Yes | Yes |
| Context Window | Not yet published | 256K | 200K |
| API Access | AI Studio (free preview) | OpenAI API | Anthropic API |
| Agentic Workflows | Antigravity platform | Assistants API | Tool use |

*Approximate scores from community testing — not officially verified like Gemini’s.

The honest take: Gemini 3.1 Pro leads on verified reasoning benchmarks. But benchmarks aren’t everything. Real-world performance depends on your specific use case, and GPT-5.3 and Claude Opus 4.6 have their own strengths — particularly in writing quality (Claude) and ecosystem maturity (OpenAI).

What Developers Should Test

Skip the benchmarks for a moment. Here’s what matters in practice:

1. Multi-Step Code Generation

Give it a complex project spec — not “write a function” but “build a dashboard that pulls from three APIs, handles errors gracefully, and renders responsive charts.” This is where reasoning improvements actually show up.

2. Debugging and Root Cause Analysis

Feed it a bug report with a stack trace and see if it can reason through the codebase to find the actual cause — not just pattern-match on the error message.

3. Logic and Math Tasks

Try problems that require genuine reasoning: constraint satisfaction, optimization, formal logic. If the ARC-AGI-2 score reflects general reasoning ability rather than skill on one benchmark, these should noticeably improve.
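One way to keep this kind of test objective is to pick puzzles whose answers can be checked mechanically. The sketch below assumes you ask each model for an N-queens placement and tell it to reply with nothing but the column index for each row (0-based, comma-separated). The prompt format and the `parse_columns`, `is_valid_queens`, and `score` helpers are illustrative names, not part of any Gemini tooling.

```python
import re


def parse_columns(reply: str) -> list[int]:
    """Pull every integer out of a free-text model reply, in order."""
    return [int(tok) for tok in re.findall(r"-?\d+", reply)]


def is_valid_queens(cols: list[int], n: int) -> bool:
    """Return True if cols[r] is a legal column for the queen in row r."""
    if len(cols) != n or any(c < 0 or c >= n for c in cols):
        return False
    for r1 in range(n):
        for r2 in range(r1 + 1, n):
            same_column = cols[r1] == cols[r2]
            same_diagonal = abs(cols[r1] - cols[r2]) == r2 - r1
            if same_column or same_diagonal:
                return False
    return True


def score(replies: list[str], n: int) -> float:
    """Fraction of replies that contain a valid n-queens placement."""
    if not replies:
        return 0.0
    valid = sum(is_valid_queens(parse_columns(r), n) for r in replies)
    return valid / len(replies)
```

Because the checker never looks at a reference answer, any correct placement counts, which makes it harder for a model to pass by recalling a memorized solution.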

4. Long Conversations with Context Switches

Start a conversation about system architecture, pivot to a specific implementation detail, then zoom back out. Can it maintain the full context without losing the thread?

5. Cost Per Quality

Track your token usage and output quality across equivalent tasks. Sometimes a “better” model costs 3x more per token — and the quality difference doesn’t justify it for routine tasks.
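The comparison comes down to simple arithmetic, sketched here under stated assumptions. Prices are passed in as USD per million tokens because production pricing for 3.1 Pro has not been announced; the `quality` field is whatever 0 to 1 rubric score you assign yourself. `Run`, `cost_usd`, and `cost_per_quality` are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass
class Run:
    model: str
    input_tokens: int
    output_tokens: int
    quality: float  # your own rubric score for the output, 0.0 to 1.0


def cost_usd(run: Run, price_in: float, price_out: float) -> float:
    """Cost of one run, given prices in USD per million tokens."""
    total = run.input_tokens * price_in + run.output_tokens * price_out
    return total / 1_000_000


def cost_per_quality(run: Run, price_in: float, price_out: float) -> float:
    """USD spent per unit of quality; lower is better."""
    if run.quality <= 0:
        return float("inf")  # a useless answer is infinitely expensive
    return cost_usd(run, price_in, price_out) / run.quality
```

Run the same task set through each model, plug in each provider’s published prices, and compare the resulting figures rather than the raw per-token rates.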

Should You Switch?

The honest answer: it depends on what you’re building.

Switch to Gemini 3.1 Pro if:

- You’re reasoning-heavy. Math, logic, scientific analysis, complex debugging — these are the use cases where the ARC-AGI-2 improvement will translate to real gains.

- You’re already in Google’s ecosystem. If you use Vertex AI, Firebase, or GCP, the integration friction is minimal.

- You want the cheapest testing. Free preview in AI Studio means you can evaluate without spending a dollar.

- Agentic workflows are your focus. Google Antigravity is an interesting new platform — worth exploring if you’re building autonomous AI agents.

Stick with GPT-5.3 or Claude Opus 4.6 if:

- Writing quality is your priority. Claude Opus 4.6 still has an edge in nuanced, long-form writing and maintaining a consistent voice.

- You need a mature plugin/tool ecosystem. OpenAI’s Assistants API and marketplace have a head start.

- Your production pipeline is already working. Switching models mid-production for marginal gains rarely pays off. If it’s not broken, keep shipping.

- You need the largest context window. Until Google publishes context window specs for 3.1 Pro, GPT-5.3’s 256K is the safest bet for massive context tasks.

Test all three if:

- You’re evaluating models for a new project

- You’re building a router that picks the best model per task

- You care about reasoning quality and want to verify the benchmarks yourself
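For the router case, a keyword-based dispatcher is enough to start collecting per-task comparisons. This is a minimal sketch: the routing rules simply mirror the strengths described above, the hint lists are made up for illustration, and the non-Gemini model strings are placeholders rather than confirmed API identifiers.

```python
ROUTES = {
    "reasoning": "gemini-3.1-pro-preview",
    "writing": "claude-opus-4.6",  # placeholder, not a confirmed API model id
    "default": "gpt-5.3",          # placeholder, not a confirmed API model id
}

# Illustrative keyword lists; tune these against your own traffic.
REASONING_HINTS = ("prove", "debug", "optimize", "constraint", "stack trace")
WRITING_HINTS = ("essay", "blog post", "rewrite", "tone")


def classify(prompt: str) -> str:
    """Assign a prompt to a task category by simple keyword matching."""
    text = prompt.lower()
    if any(hint in text for hint in REASONING_HINTS):
        return "reasoning"
    if any(hint in text for hint in WRITING_HINTS):
        return "writing"
    return "default"


def pick_model(prompt: str) -> str:
    """Return the model name the router would send this prompt to."""
    return ROUTES[classify(prompt)]
```

Keyword matching is crude, but it keeps routing decisions easy to audit. Once you have logged results per category, you can replace `classify` with something smarter without touching the rest.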

How to Get Access

Right now, today:

- Google AI Studio — Go to aistudio.google.com, select `gemini-3.1-pro-preview`, and start testing. Free.

- Gemini CLI — Install from geminicli.com for terminal access.

- Gemini API — Use the model name `gemini-3.1-pro-preview` in your API calls.

- Vertex AI — Available for enterprise customers through Google Cloud.

- Gemini App — Rolling out to Pro ($20/mo) and Ultra ($30/mo) subscribers.

No waitlist. No limited access. Google shipped this wide from day one — a signal they’re confident in the model’s readiness.
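For the API route, a call can be sketched with nothing but the Python standard library. This assumes the preview model is served through the same `generateContent` REST endpoint and `x-goog-api-key` header as earlier Gemini models, and that you have an API key from AI Studio. `build_request` and `generate` are illustrative helper names, and the response parsing assumes the first part of the first candidate is plain text.

```python
import json
import urllib.request

API_ROOT = "https://generativelanguage.googleapis.com/v1beta"
MODEL = "gemini-3.1-pro-preview"


def build_request(prompt: str, api_key: str, model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) a generateContent request."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url=f"{API_ROOT}/models/{model}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def generate(prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first candidate."""
    request = build_request(prompt, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        payload = json.load(response)
    # Assumes at least one candidate whose first part is text.
    return payload["candidates"][0]["content"]["parts"][0]["text"]
```

Calling `generate("Explain ARC-AGI-2 in two sentences.", your_key)` should return the model’s reply as a string. Keeping `build_request` separate lets you inspect or log the payload without making a network call.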

The Bottom Line

Gemini 3.1 Pro’s 77.1% ARC-AGI-2 score isn’t marketing fluff — it’s a verified, substantial leap in reasoning capability. Doubling the previous version’s score on a benchmark designed to test genuine reasoning (not memorization) is significant.

Does that make it the “best” model? Not automatically. GPT-5.3 and Claude Opus 4.6 remain strong competitors with their own advantages. The LLM landscape in 2026 isn’t about one winner — it’s about picking the right model for the right task.

But if you care about reasoning — and most developers should — Gemini 3.1 Pro just became mandatory on your evaluation shortlist.

Go test it. It’s free to try in AI Studio. Form your own opinion. The benchmarks suggest something real changed under the hood.

Benchmarks cited from Google’s official announcement (February 19, 2026). Community benchmark scores for competing models are approximate and based on publicly available testing. Always verify with official sources.